In the ongoing effort to make AI more like humans, OpenAI's GPT models have continually pushed the boundaries. GPT-4 is now able to accept prompts of both text and images.

Multimodality in generative AI denotes a model's capability to produce varied outputs like text, images, or audio based on the input. These models, trained on specific data, learn underlying patterns to generate similar new data, enriching AI applications.

Latest Strides in Multimodal AI

A recent notable leap in this field is the integration of DALL-E 3 into ChatGPT, a significant upgrade in OpenAI's text-to-image technology. This combination allows for a smoother interaction where ChatGPT helps craft precise prompts for DALL-E 3, turning user ideas into vivid AI-generated art. So, while users can interact with DALL-E 3 directly, having ChatGPT in the mix makes the process of creating AI art much more user-friendly.

Read more about DALL-E 3 and its integration with ChatGPT at the link below. This collaboration not only showcases the advancement in multimodal AI but also makes AI art creation a breeze for users.

https://openai.com/dall-e-3

Google Health, on the other hand, introduced Med-PaLM M in June this year. It is a multimodal generative model adept at encoding and interpreting diverse biomedical data. This was achieved by fine-tuning PaLM-E, a language model, to cater to medical domains using an open-source benchmark, MultiMedBench. This benchmark consists of over 1 million samples across 7 biomedical data types and 14 tasks, such as medical question answering and radiology report generation.

Various industries are adopting innovative multimodal AI tools to fuel business expansion, streamline operations, and elevate customer engagement. Progress in voice, video, and text AI capabilities is propelling multimodal AI's growth.

Enterprises seek multimodal AI applications capable of overhauling business models and processes, opening growth avenues across the generative AI ecosystem, from data tools to emerging AI applications.

After GPT-4's launch in March, some users observed a decline in its response quality over time, a concern echoed by notable developers and on OpenAI's forums. Initially dismissed by OpenAI, the issue was later confirmed by a study. It reported a drop in GPT-4's accuracy from 97.6% to 2.4% between March and June on a prime-number identification task, indicating a decline in answer quality with subsequent model updates.

ChatGPT (Blue) & Artificial Intelligence (Red) Google Search Trend

The hype around OpenAI's ChatGPT is back. It now comes with a vision feature, GPT-4V, that lets users have GPT-4 analyze images they provide. This is the newest feature that has been opened up to users.

Adding image analysis to large language models (LLMs) like GPT-4 is seen by some as a big step forward in AI research and development. This kind of multimodal LLM opens up new possibilities, taking language models beyond text to offer new interfaces and solve new kinds of tasks, creating fresh experiences for users.

The training of GPT-4V was finished in 2022, with early access rolled out in March 2023. The visual feature in GPT-4V is powered by GPT-4 tech, and the training process remained the same. Initially, the model was trained to predict the next word in a text using a massive dataset of both text and images from various sources, including the internet.

Later, it was fine-tuned with more data, using a method named reinforcement learning from human feedback (RLHF), to generate outputs that humans preferred.

GPT-4 Vision Mechanics

GPT-4's remarkable vision-language capabilities are impressive, but the methods behind them have not been disclosed in detail.

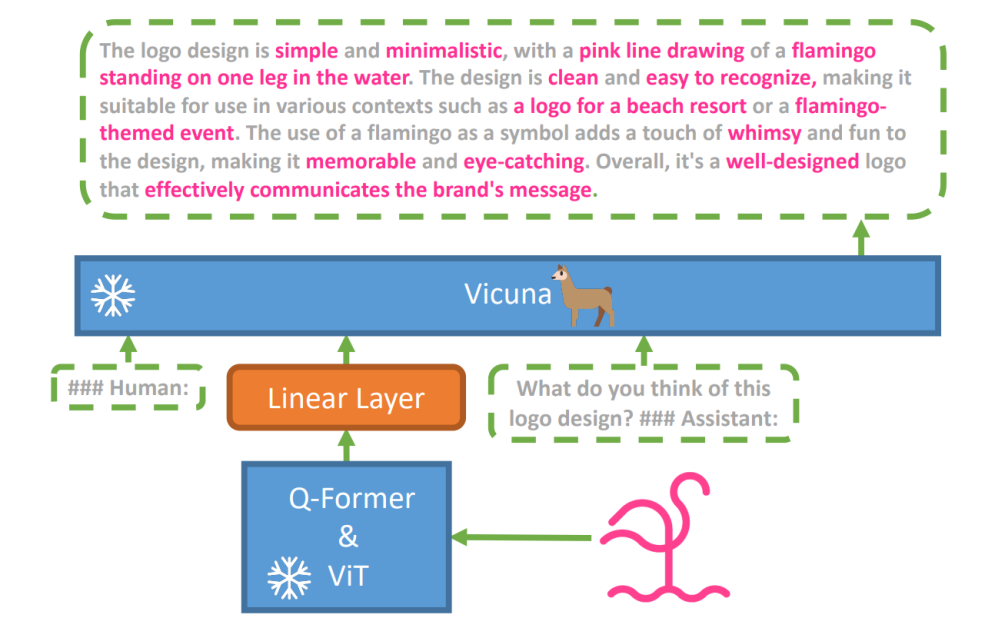

To explore the hypothesis that these capabilities stem from pairing visual features with an advanced LLM, a new vision-language model, MiniGPT-4, was introduced, using an advanced LLM named Vicuna. This model uses a vision encoder with pre-trained components for visual perception, aligning encoded visual features with the Vicuna language model through a single projection layer. The architecture of MiniGPT-4 is simple yet effective, with a focus on aligning visual and language features to improve visual conversation capabilities.

MiniGPT-4's architecture includes a vision encoder with pre-trained ViT and Q-Former, a single linear projection layer, and an advanced Vicuna large language model.
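The role of the single linear projection layer can be illustrated with a minimal, dependency-free sketch. It is not MiniGPT-4's actual code: the toy dimensions, the random stand-in for the vision encoder output, and the helper name `linear_project` are all assumptions made for illustration. The only point is that each visual token is mapped by y = Wx + b into the width the language model expects.

```python
import random

def linear_project(features, weight, bias):
    """Apply y = W x + b to every visual token.

    features: list of tokens, each a list of length d_vision
    weight:   d_llm rows, each a list of length d_vision
    bias:     list of length d_llm
    """
    projected = []
    for token in features:
        projected.append([
            sum(w * x for w, x in zip(row, token)) + b
            for row, b in zip(weight, bias)
        ])
    return projected

# Toy sizes, chosen only for illustration; real models are far larger.
NUM_VISUAL_TOKENS, D_VISION, D_LLM = 4, 6, 8

rng = random.Random(0)

# Hypothetical stand-in for the output of the pre-trained vision encoder
# (ViT + Q-Former): one feature vector per visual token.
visual_tokens = [[rng.uniform(-1, 1) for _ in range(D_VISION)]
                 for _ in range(NUM_VISUAL_TOKENS)]

# Parameters of the projection layer (randomly initialised here).
weight = [[rng.uniform(-0.1, 0.1) for _ in range(D_VISION)]
          for _ in range(D_LLM)]
bias = [0.0] * D_LLM

# The projected tokens now have the language model's embedding width.
llm_inputs = linear_project(visual_tokens, weight, bias)
print(len(llm_inputs), len(llm_inputs[0]))  # 4 8
```

The number of visual tokens is unchanged by the projection; only the width of each token changes, so the projected tokens can sit alongside ordinary text embeddings in the language model's input.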

The trend of autoregressive language models in vision-language tasks has also grown, capitalizing on cross-modal transfer to share knowledge between language and multimodal domains.

MiniGPT-4 bridges the visual and language domains by aligning visual information from a pre-trained vision encoder with an advanced LLM. The model uses Vicuna as the language decoder and follows a two-stage training approach. Initially, it is trained on a large dataset of image-text pairs to grasp vision-language knowledge, followed by fine-tuning on a smaller, high-quality dataset to enhance generation reliability and usability.

To improve the naturalness and usability of generated language in MiniGPT-4, researchers developed a two-stage alignment process, addressing the lack of adequate vision-language alignment datasets. They curated a specialized dataset for this purpose.

Initially, the model generated detailed descriptions of input images, enhancing the detail by using a conversational prompt aligned with the Vicuna language model's format. This stage aimed at producing more comprehensive image descriptions.

Initial Image Description Prompt:

###Human: <Img><ImageFeature></Img>Describe this image in detail. Give as many details as possible. Say everything you see. ###Assistant:

For data post-processing, any inconsistencies or errors in the generated descriptions were corrected using ChatGPT, followed by manual verification to ensure high quality.

Second-Stage Fine-tuning Prompt:

###Human: <Img><ImageFeature></Img><Instruction>###Assistant:
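The two prompt templates above can be assembled with a few lines of string formatting. This is a minimal sketch: the function names are hypothetical, and `<ImageFeature>` is kept as a literal placeholder on the assumption that the real pipeline swaps it for the projected visual features.

```python
# Placeholder marking where the image features go in the prompt.
# Treated here as plain text; replacing it with actual features is
# assumed to happen elsewhere in the pipeline.
IMAGE_SLOT = "<Img><ImageFeature></Img>"

def stage_one_prompt() -> str:
    """Prompt used to elicit a detailed description of the input image."""
    return (
        f"###Human: {IMAGE_SLOT}Describe this image in detail. "
        "Give as many details as possible. Say everything you see. "
        "###Assistant:"
    )

def stage_two_prompt(instruction: str) -> str:
    """Second-stage template: the image slot followed by an instruction."""
    return f"###Human: {IMAGE_SLOT}{instruction}###Assistant:"

print(stage_one_prompt())
print(stage_two_prompt("What is unusual about this image? "))
```

Keeping the `###Human:` and `###Assistant:` markers identical in both templates matches the conversational format the language model was aligned with, so only the instruction text varies between examples.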

This exploration opens a window into understanding the mechanics of multimodal generative AI like GPT-4, shedding light on how vision and language modalities can be effectively integrated to generate coherent and contextually rich outputs.

Exploring GPT-4 Vision

Identifying Image Origins with ChatGPT

GPT-4 Vision enhances ChatGPT's ability to analyze images and pinpoint their geographical origins. This feature shifts user interactions from just text to a mix of text and visuals, becoming a handy tool for those curious about different places through image data.

Asking ChatGPT where a landmark image was taken
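For readers who want to try the same kind of question programmatically, the sketch below builds a request body that pairs a text question with an image. Everything here is an assumption rather than something stated in this article: the payload shape follows OpenAI's chat completions format with `image_url` content parts as I understand it, the model name `gpt-4-vision-preview` may no longer be available, and the image bytes are a placeholder. The code only constructs the payload and sends nothing over the network; check the current API documentation before relying on it.

```python
import base64
import json

def build_vision_request(image_bytes: bytes, question: str,
                         model: str = "gpt-4-vision-preview") -> dict:
    """Build a chat-style payload with one text part and one image part.

    The model name and field layout are assumptions; verify them against
    the current API documentation.
    """
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return {
        "model": model,
        "messages": [{
            "role": "user",
            "content": [
                {"type": "text", "text": question},
                {"type": "image_url",
                 "image_url": {"url": f"data:image/jpeg;base64,{encoded}"}},
            ],
        }],
        "max_tokens": 300,
    }

# Placeholder bytes standing in for a real JPEG file read from disk.
fake_image = b"\xff\xd8\xff\xe0 placeholder bytes, not a real photo"
payload = build_vision_request(fake_image, "Where was this photo taken?")

# Show the structure of the request without the long base64 string.
print(json.dumps(payload["messages"][0]["content"][0], indent=2))
```

Embedding the image as a base64 data URL keeps the request self-contained, which is convenient for local files; a publicly reachable image URL could be used in the same field instead.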

Complex Math Concepts

GPT-4 Vision excels at delving into complex mathematical ideas by analyzing graphical or handwritten expressions. This feature acts as a useful tool for individuals looking to solve intricate mathematical problems, making GPT-4 Vision a notable aid in educational and academic fields.

Asking ChatGPT to understand a complex math concept

Converting Handwritten Input to LaTeX Code

One of GPT-4V's remarkable abilities is its capability to translate handwritten inputs into LaTeX code. This feature is a boon for researchers, academics, and students who often need to convert handwritten mathematical expressions or other technical information into a digital format. The transformation from handwritten to LaTeX expands the horizon of document digitization and simplifies the technical writing process.

GPT-4V's ability to convert handwritten input into LaTeX code

Extracting Table Details

GPT-4V showcases skill in extracting details from tables and addressing related inquiries, a vital asset in data analysis. Users can utilize GPT-4V to sift through tables, gather key insights, and resolve data-driven questions, making it a robust tool for data analysts and other professionals.

GPT-4V deciphering table details and responding to related queries

Comprehending Visual Pointing

The unique ability of GPT-4V to comprehend visual pointing adds a new dimension to user interaction. By understanding visual cues, GPT-4V can respond to queries with a higher contextual understanding.

GPT-4V showcases the distinct ability to comprehend visual pointing

Building Simple Mock-Up Websites Using a Drawing

Motivated by this tweet, I tried to create a mock-up for the unite.ai website.

While the outcome didn't quite match my initial vision, here's the result I achieved.

ChatGPT Vision-based output HTML frontend

Limitations & Flaws of GPT-4V(ision)

To analyze GPT-4V, the OpenAI team carried out qualitative and quantitative assessments. Qualitative ones included internal tests and external expert reviews, while quantitative ones measured model refusals and accuracy in various scenarios such as identifying harmful content, demographic recognition, privacy concerns, geolocation, cybersecurity, and multimodal jailbreaks.
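To make the quantitative side concrete, here is a minimal sketch of how refusal rate and accuracy could be tallied from labelled evaluation records. It is not OpenAI's evaluation code: the `EvalRecord` structure, the `summarize` helper, and the four sample records are invented purely for illustration and are not real results.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class EvalRecord:
    scenario: str             # e.g. "geolocation"
    refused: bool             # did the model decline to answer?
    correct: Optional[bool]   # None when the model refused

def summarize(records):
    """Return the refusal rate and the accuracy over answered prompts."""
    total = len(records)
    refusals = sum(r.refused for r in records)
    answered = [r for r in records if not r.refused]
    accuracy = (
        sum(bool(r.correct) for r in answered) / len(answered)
        if answered else None
    )
    return {
        "refusal_rate": refusals / total if total else None,
        "accuracy_when_answered": accuracy,
    }

# Made-up records for illustration only; these are not real measurements.
records = [
    EvalRecord("geolocation", refused=False, correct=True),
    EvalRecord("geolocation", refused=False, correct=False),
    EvalRecord("demographic recognition", refused=True, correct=None),
    EvalRecord("harmful content", refused=True, correct=None),
]

print(summarize(records))
# {'refusal_rate': 0.5, 'accuracy_when_answered': 0.5}
```

Reporting accuracy only over answered prompts keeps the two metrics separate: a model that refuses often is not penalized twice, and a high refusal rate remains visible on its own.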

Still, the model is not perfect.

The paper highlights limitations of GPT-4V, like incorrect inferences and missing text or characters in images. It may hallucinate or invent facts. Notably, it is not suited for identifying dangerous substances in images, often misidentifying them.

In medical imaging, GPT-4V can provide inconsistent responses and lacks awareness of standard practices, leading to potential misdiagnoses.

Unreliable performance for medical purposes (Source)

It also fails to grasp the nuances of certain hate symbols and may generate inappropriate content based on the visual inputs. OpenAI advises against using GPT-4V for critical interpretations, especially in medical or sensitive contexts.

The arrival of GPT-4 Vision (GPT-4V) brings along a bunch of cool possibilities and new hurdles to jump over. Before rolling it out, a lot of effort has gone into making sure risks, especially when it comes to pictures of people, are well looked into and reduced. It's impressive to see how GPT-4V has stepped up, showing a lot of promise in tricky areas like medicine and science.

Now, there are some big questions on the table. For instance, should these models be able to identify famous people from photos? Should they guess a person's gender, race, or feelings from a picture? And should there be special tweaks to help visually impaired individuals? These questions open up a can of worms about privacy, fairness, and how AI should fit into our lives, which is something everyone should have a say in.