Large language models (LLMs) like OpenAI’s GPT series have been trained on a diverse range of publicly available data, demonstrating remarkable capabilities in text generation, summarization, question answering, and planning. Despite their versatility, a frequently asked question is how to integrate these models seamlessly with custom, private, or proprietary data.

Businesses and individuals are flooded with unique, custom data, often housed in applications such as Notion, Slack, and Salesforce, or stored in personal files. To leverage LLMs on this specific data, several methodologies have been proposed and tested.

Fine-tuning is one such approach: it adjusts the model’s weights to incorporate knowledge from particular datasets. However, this process is not without its challenges. It demands substantial effort in data preparation, coupled with a difficult optimization procedure, and requires a certain level of machine learning expertise. Moreover, the financial cost can be significant, particularly when dealing with large datasets.

In-context learning has emerged as an alternative, focusing on crafting inputs and prompts that give the LLM the context it needs to generate accurate outputs. This approach avoids extensive model retraining, offering a more efficient and accessible way to integrate private data.

The drawback, however, is its reliance on the user’s skill and expertise in prompt engineering. Additionally, in-context learning may not always be as precise or reliable as fine-tuning, especially with highly specialized or technical data. The model’s pre-training on a broad range of internet text does not guarantee an understanding of specific jargon or context, which can lead to inaccurate or irrelevant outputs. This is particularly problematic when the private data comes from a niche domain or industry.

Moreover, the amount of context that can be provided in a single prompt is limited, and the LLM’s performance may degrade as the complexity of the task increases. There is also the issue of privacy and data security, since the information provided in the prompt could be sensitive or confidential.
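To make the in-context approach and its size limit concrete, here is a minimal sketch in plain Python, not LlamaIndex code. It places private-data chunks ahead of the question in a single prompt and stops adding chunks once a fixed budget is reached. `build_prompt` and `count_tokens` are hypothetical helpers written for this example, and counting whitespace-separated words is only a crude stand-in for a real tokenizer.

```python
def count_tokens(text: str) -> int:
    # Hypothetical stand-in for a tokenizer: counts whitespace-separated words.
    return len(text.split())


def build_prompt(question: str, chunks: list[str], budget: int) -> str:
    """Place private-data chunks ahead of the question, stopping at `budget` tokens."""
    header = "Answer the question using only the context below.\n\nContext:\n"
    footer = f"\nQuestion: {question}\nAnswer:"
    used = count_tokens(header) + count_tokens(footer)
    kept = []
    for chunk in chunks:
        cost = count_tokens(chunk)
        if used + cost > budget:
            break  # the context window is full; later chunks never reach the model
        kept.append(chunk)
        used += cost
    return header + "\n".join(kept) + footer


chunks = [
    "Acme's refund window is 30 days.",
    "Refunds require the original receipt.",
    "Acme was founded in 1999 in Ohio.",
]
prompt = build_prompt("How long is the refund window?", chunks, budget=30)
print(prompt)
```

With `budget=30`, the third chunk is dropped: whatever does not fit in the prompt is simply invisible to the model.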

As the community explores these techniques, tools like LlamaIndex are gaining attention.

LlamaIndex

LlamaIndex was started by Jerry Liu, a former Uber research scientist. While experimenting with GPT-3 last fall, Liu noticed the model’s limitations in handling private data, such as personal files. This observation led to the creation of the open-source project LlamaIndex.

The initiative has attracted investors, securing $8.5 million in a recent seed funding round.

LlamaIndex facilitates the augmentation of LLMs with custom data, bridging the gap between pre-trained models and custom data use cases. Through LlamaIndex, users can apply LLMs to their own data, enabling knowledge generation and reasoning grounded in personalized insights.

Users can easily provide LLMs with their own data, so that knowledge generation and reasoning are tailored to that data. LlamaIndex addresses the limitations of in-context learning by providing a more user-friendly and secure platform for data interaction, ensuring that even those with limited machine learning expertise can use LLMs to their full potential on private data.

1. Retrieval Augmented Generation (RAG):

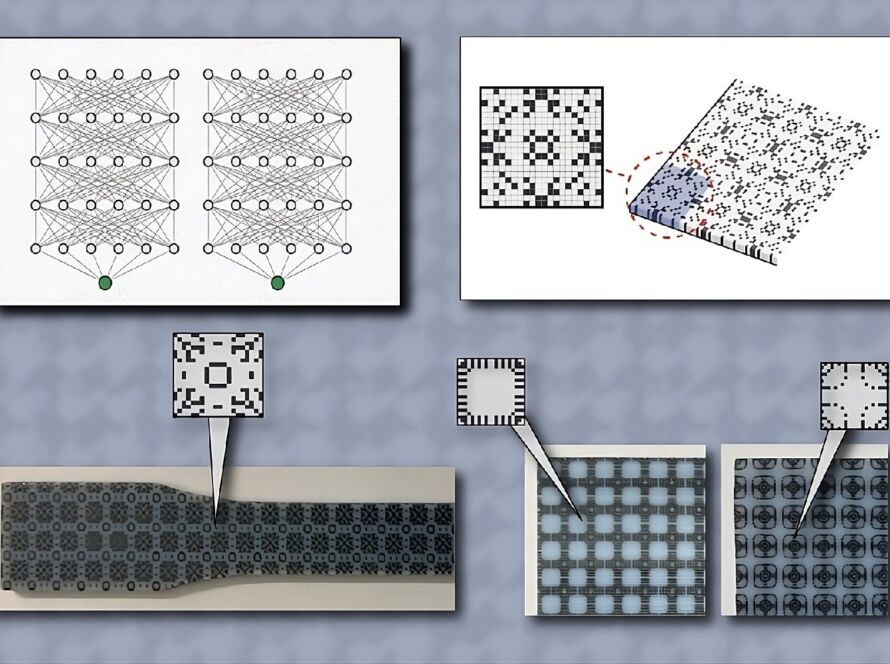

LlamaIndex RAG

RAG is a two-stage process designed to couple LLMs with custom data, improving the model’s ability to deliver more precise and informed responses. The process comprises:

- Indexing Stage: This is the preparatory stage, where the groundwork for creating the knowledge base is laid.

LlamaIndex Indexing

- Querying Stage: Here, the knowledge base is searched for relevant context to help the LLM answer queries.

LlamaIndex Query Stage
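The two stages can be illustrated with a toy sketch in plain Python. This is not LlamaIndex’s implementation: the indexing stage splits documents into paragraph chunks, and the querying stage ranks chunks by how many words they share with the question and then builds the augmented prompt. Word overlap is a deliberately simple stand-in for the vector embeddings LlamaIndex uses, and all function names are hypothetical.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase word set; a crude stand-in for vector embeddings."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def build_knowledge_base(documents: list[str]) -> list[tuple[str, set[str]]]:
    """Indexing stage: split documents into paragraph chunks and precompute their words."""
    kb = []
    for doc in documents:
        for chunk in doc.split("\n\n"):
            chunk = chunk.strip()
            if chunk:
                kb.append((chunk, tokenize(chunk)))
    return kb


def retrieve(kb: list[tuple[str, set[str]]], query: str, top_k: int = 2) -> list[str]:
    """Querying stage: rank chunks by words shared with the query, keep the best."""
    q = tokenize(query)
    ranked = sorted(kb, key=lambda item: len(q & item[1]), reverse=True)
    return [chunk for chunk, words in ranked[:top_k] if q & words]


def augment_prompt(query: str, context: list[str]) -> str:
    """The retrieved context is sent to the LLM together with the question."""
    return "Context:\n" + "\n".join(context) + f"\n\nQuestion: {query}"


docs = [
    "Our VPN requires two-factor authentication.\n\nOffice hours are 9 to 5.",
    "Expense reports are due on the 5th of each month.",
]
kb = build_knowledge_base(docs)
hits = retrieve(kb, "When are expense reports due?", top_k=1)
print(augment_prompt("When are expense reports due?", hits))
```

The indexing work happens once, up front; each query then only pays for retrieval plus a single LLM call over a small, relevant context.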

Indexing Journey with LlamaIndex:

- Data Connectors: Think of data connectors as your data’s passport to LlamaIndex. They import data from varied sources and formats, wrapping it in a simple ‘Document’ representation. Data connectors can be found in LlamaHub, an open-source repository of data loaders. These loaders are built for easy integration, enabling a plug-and-play experience with any LlamaIndex application.

LlamaHub (https://llamahub.ai/)

- Documents / Nodes: A Document is like a generic suitcase that can hold diverse data types, whether a PDF, API output, or database entries. A Node, on the other hand, is a snippet or “chunk” of a Document, enriched with metadata and relationships to other nodes, which provides a solid foundation for precise data retrieval later on.

- Data Indexes: After data ingestion, LlamaIndex indexes the data into a retrievable format. Behind the scenes, it breaks raw documents into intermediate representations, computes vector embeddings, and infers metadata. Among the indexes, ‘VectorStoreIndex’ is usually the go-to choice.
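The Document-to-Node relationship can be sketched in a few lines. The `Document` and `Node` dataclasses below are simplified stand-ins written for this example, not LlamaIndex’s own classes: each node is a fixed-size word window that carries its parent document’s metadata plus links to the neighbouring chunks. Real splitters usually chunk by tokens or sentences, often with overlap between chunks.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Document:
    text: str
    metadata: dict = field(default_factory=dict)


@dataclass
class Node:
    text: str
    metadata: dict
    prev_id: Optional[int] = None  # link to the preceding chunk
    next_id: Optional[int] = None  # link to the following chunk


def split_into_nodes(doc: Document, chunk_size: int = 5) -> list[Node]:
    """Cut a Document into fixed-size word windows and link neighbouring chunks."""
    words = doc.text.split()
    nodes = []
    for i, start in enumerate(range(0, len(words), chunk_size)):
        chunk = " ".join(words[start:start + chunk_size])
        # Each node inherits the document's metadata and records its position.
        nodes.append(Node(text=chunk, metadata={**doc.metadata, "chunk_index": i}))
    for i, node in enumerate(nodes):
        node.prev_id = i - 1 if i > 0 else None
        node.next_id = i + 1 if i < len(nodes) - 1 else None
    return nodes


doc = Document("one two three four five six seven", {"source": "notes.txt"})
nodes = split_into_nodes(doc, chunk_size=3)
for node in nodes:
    print(node.text, node.metadata, node.prev_id, node.next_id)
```

Keeping the source metadata on every node is what later lets a retriever report where an answer came from, and the prev/next links let it pull in surrounding context.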

Types of Indexes in LlamaIndex: Key to Organized Data

LlamaIndex offers several types of indexes, each suited to different needs and use cases. At the core of all of them lie the “nodes” discussed above. Let’s look at how each index works and where it is useful.

1. List Index:

- Mechanism: A List Index arranges nodes sequentially, like a list. After the input data is chunked into nodes, they are stored in linear order, ready to be queried either sequentially or via keywords or embeddings.

- Advantage: This index type shines when you need sequential querying. LlamaIndex makes use of your entire input data, even if it exceeds the LLM’s token limit, by querying the text of each node in turn and refining the answer as it moves down the list.
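The sequential “query each node, then refine” behaviour can be sketched as follows. This is an illustration under assumptions, not LlamaIndex’s response synthesizer: `refine_over_list` is a hypothetical function, the prompt wording is invented, and `llm` is any callable that maps a prompt string to a completion (a recording stand-in is used so the example runs offline).

```python
from typing import Callable


def refine_over_list(nodes: list[str], query: str, llm: Callable[[str], str]) -> str:
    """Visit every node in order, asking the LLM to refine its running answer."""
    answer = ""
    for text in nodes:
        if not answer:
            prompt = f"Context: {text}\nQuestion: {query}\nAnswer:"
        else:
            prompt = (
                f"Existing answer: {answer}\nNew context: {text}\n"
                f"Refine the answer to: {query}"
            )
        # One LLM call per node, so no single prompt has to hold all of the data.
        answer = llm(prompt)
    return answer


# Usage with a stand-in "LLM" that records prompts and numbers its drafts.
calls = []


def fake_llm(prompt: str) -> str:
    calls.append(prompt)
    return f"draft {len(calls)}"


result = refine_over_list(["chunk A", "chunk B", "chunk C"], "What changed?", fake_llm)
print(result)
```

The cost of this design is one LLM call per node, which is why it suits exhaustive, in-order reading rather than quick lookups.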

2. Vector Store Index:

- Mechanism: Here, nodes are transformed into vector embeddings, stored either locally or in a specialized vector database like Milvus. When queried, the index fetches the top_k most similar nodes and passes them to the response synthesizer.

- Advantage: This index fits workflows that depend on comparing texts for semantic similarity via vector search.
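The top_k retrieval step can be sketched with a toy in-memory store. This is not LlamaIndex’s `VectorStoreIndex` or Milvus: the “embedding” here is a bag-of-words count vector, which only captures shared words rather than meaning, and `ToyVectorStore` is a hypothetical class. A real setup would replace `embed` with an embedding model such as `text-embedding-ada-002`, but the idea of ranking stored nodes by cosine similarity to the query vector is the same.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: a bag-of-words count vector (real systems use a neural model)."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[w] * b[w] for w in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class ToyVectorStore:
    def __init__(self) -> None:
        self.rows: list[tuple[str, Counter]] = []

    def add(self, text: str) -> None:
        self.rows.append((text, embed(text)))  # store each node with its vector

    def query(self, question: str, top_k: int = 2) -> list[str]:
        q = embed(question)
        scored = sorted(self.rows, key=lambda row: cosine(q, row[1]), reverse=True)
        return [text for text, _ in scored[:top_k]]


store = ToyVectorStore()
for node in ["Cats purr when they are content.",
             "Stock markets fell sharply on Monday.",
             "Dogs wag their tails when happy."]:
    store.add(node)
top = store.query("Why do cats purr?", top_k=1)
print(top)
```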

3. Tree Index:

- Mechanism: In a Tree Index, the input data is organized into a tree structure, built bottom-up from leaf nodes (the original data chunks). Parent nodes are summaries of their leaf nodes, generated using GPT. During a query, the tree index can traverse from the root node down to the leaf nodes, or build responses directly from selected leaf nodes.

- Advantage: With a Tree Index, querying long text chunks becomes more efficient, and extracting information from different text segments is simpler.
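A tree index can be sketched as repeated bottom-up summarization followed by a greedy root-to-leaf walk. Everything here is a hypothetical illustration rather than LlamaIndex’s `TreeIndex` code: `summarize` stands in for the GPT call (in the usage below it simply concatenates its children so the demo runs offline), and the walk follows the child sharing the most words with the query, where the real index would typically ask the LLM which child to follow.

```python
from typing import Callable


def build_tree(leaves: list[str], summarize: Callable[[list[str]], str],
               fanout: int = 2) -> list[list[str]]:
    """Build levels bottom-up: levels[0] holds the leaves, levels[-1] the root."""
    levels = [leaves]
    while len(levels[-1]) > 1:
        below = levels[-1]
        # Each parent summarizes up to `fanout` consecutive children.
        levels.append([summarize(below[i:i + fanout])
                       for i in range(0, len(below), fanout)])
    return levels


def traverse(levels: list[list[str]], query: str, fanout: int = 2) -> str:
    """Walk from the root to a leaf, following the child most similar to the query."""
    q = set(query.lower().split())
    idx = 0  # index of the current node; the root is the only node on the top level
    for level in range(len(levels) - 2, -1, -1):
        nodes = levels[level]
        children = range(idx * fanout, min((idx + 1) * fanout, len(nodes)))
        idx = max(children, key=lambda i: len(q & set(nodes[i].lower().split())))
    return levels[0][idx]


leaves = ["apples are red", "bananas are yellow", "the sky is blue", "grass is green"]
# Stand-in for a GPT summary: concatenate the children so the demo runs offline.
levels = build_tree(leaves, summarize=lambda texts: " ".join(texts))
print(traverse(levels, "what color is grass"))
```

Because each step only compares a handful of children, a lookup touches roughly one node per level instead of every chunk.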

4. Keyword Index:

- Mechanism: A map from keywords to nodes forms the core of a Keyword Index. When queried, keywords are extracted from the query, and only the nodes mapped to those keywords are retrieved.

- Advantage: A Keyword Index works well when user queries are clear and specific. For example, sifting through healthcare documents becomes more efficient when you zero in only on documents relevant to COVID-19.
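A keyword index boils down to an inverted map from keywords to node IDs. The sketch below is a simplified illustration, not LlamaIndex’s `KeywordTableIndex`: it extracts keywords with a regular expression and a tiny hypothetical stopword list, whereas LlamaIndex’s keyword indexes can, for example, ask an LLM to extract keywords. The usage mirrors the COVID-19 example above.

```python
import re
from collections import defaultdict

# Hypothetical, deliberately tiny stopword list for this example.
STOPWORDS = {"the", "a", "an", "of", "to", "in", "is", "are", "for", "on", "and", "what", "about"}


def keywords(text: str) -> set[str]:
    """Naive keyword extraction: lowercase words minus a small stopword list."""
    return {w for w in re.findall(r"[a-z0-9-]+", text.lower()) if w not in STOPWORDS}


def build_keyword_index(nodes: list[str]) -> dict[str, set[int]]:
    table = defaultdict(set)
    for node_id, text in enumerate(nodes):
        for kw in keywords(text):
            table[kw].add(node_id)  # keyword -> ids of the nodes that mention it
    return table


def query_keyword_index(table: dict[str, set[int]], nodes: list[str], query: str) -> list[str]:
    """Return only the nodes mapped to at least one keyword found in the query."""
    ids: set[int] = set()
    for kw in keywords(query):
        ids |= table.get(kw, set())
    return [nodes[i] for i in sorted(ids)]


nodes = [
    "COVID-19 vaccine dosing guidance for adults.",
    "Seasonal influenza surveillance report.",
    "COVID-19 ICU admissions by region.",
]
table = build_keyword_index(nodes)
hits = query_keyword_index(table, nodes, "Guidance on COVID-19")
print(hits)
```

Lookups are cheap and exact, but a query that uses a synonym absent from the map (say, “coronavirus”) retrieves nothing, which is why this index suits clear, predictable queries.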

Installing LlamaIndex

Installing LlamaIndex is straightforward. You can install it either directly from pip or from source. (Make sure you have Python installed on your system, or use Google Colab.)

1. Installation from pip:

- Execute the following command: `pip install llama-index`

- Note: During installation, LlamaIndex may download and store local files for certain packages like NLTK and HuggingFace. To specify a directory for these files, use the `LLAMA_INDEX_CACHE_DIR` environment variable.

2. Installation from source:

- First, clone the LlamaIndex repository from GitHub:

  `git clone https://github.com/jerryjliu/llama_index.git`

- Once cloned, navigate to the project directory: `cd llama_index`

- You will need Poetry for managing package dependencies.

- Now, create a virtual environment using Poetry: `poetry shell`

- Lastly, install the core package requirements with: `poetry install`

Setting Up Your Environment for LlamaIndex

1. OpenAI Setup:

- By default, LlamaIndex uses OpenAI’s `gpt-3.5-turbo` for text generation and `text-embedding-ada-002` for retrieval and embeddings.

- To use this setup, you’ll need an `OPENAI_API_KEY`. Get one by registering on OpenAI’s website and creating a new API token.

- You can customize the underlying Large Language Model (LLM) to suit your project. Depending on your LLM provider, you might need additional environment keys and tokens.

2. Local Environment Setup:

- If you prefer not to use OpenAI, LlamaIndex automatically switches to local models: `LlamaCPP` and `llama2-chat-13B` for text generation, and `BAAI/bge-small-en` for retrieval and embeddings.

- To use `LlamaCPP`, follow the provided installation guide. Make sure to install the `llama-cpp-python` package, ideally compiled with support for your GPU. This setup uses around 11.5GB of memory across the CPU and GPU.

- For local embeddings, run `pip install sentence-transformers`. This local setup uses about 500MB of memory.

With these setups, you can tailor your environment either to use OpenAI or to run models locally, depending on your project’s requirements and resources.

A Simple Use Case: Querying Webpages with LlamaIndex and OpenAI

Here’s a simple Python script that demonstrates how to query a webpage for specific insights:

```python
# In Google Colab or Jupyter, the "!" prefix runs a shell command
!pip install llama-index html2text

import os

# Import path from older (pre-0.10) llama-index releases
from llama_index import VectorStoreIndex, SimpleWebPageReader

# Enter your OpenAI key below:
os.environ["OPENAI_API_KEY"] = ""

# URL you want to load into your vector store:
url = "http://www.paulgraham.com/fr.html"

# Load the URL into documents (multiple documents possible)
documents = SimpleWebPageReader(html_to_text=True).load_data([url])

# Create a vector store index from the documents
index = VectorStoreIndex.from_documents(documents)

# Create a query engine so we can ask it questions:
query_engine = index.as_query_engine()

# Ask as many questions as you want against the loaded data:
response = query_engine.query("What are the three best pieces of advice by Paul to raise money?")

print(response)
```

The three best pieces of advice by Paul to raise money are: 1. Start with a low number when initially raising money. This allows for flexibility and increases the chances of raising more funds in the long run. 2. Aim to be profitable if possible. Having a plan to reach profitability without relying on additional funding makes the startup more attractive to investors. 3. Don't optimize for valuation. While valuation is important, it is not the most crucial factor in fundraising. Focus on getting the necessary funds and finding good investors instead.

Google Colab LlamaIndex Notebook

With this script, you’ve built a tool that extracts specific information from a webpage simply by asking a question. This is just a glimpse of what can be achieved with LlamaIndex and OpenAI when querying web data.

LlamaIndex vs Langchain: Choosing Based on Your Goal

Your choice between LlamaIndex and Langchain will depend on your project’s objective. If you want to build an intelligent search tool, LlamaIndex is a solid pick, excelling as a smart storage mechanism for data retrieval. On the other hand, if you want to create a system like ChatGPT with plugin capabilities, Langchain is the better fit. It not only supports using multiple instances of ChatGPT and LlamaIndex together but also expands functionality by allowing the construction of multi-task agents. For instance, with Langchain you can create agents that execute Python code while simultaneously running a Google search. In short, while LlamaIndex excels at data handling, Langchain orchestrates multiple tools to deliver a holistic solution.

LlamaIndex Logo Artwork created using Midjourney